Overview

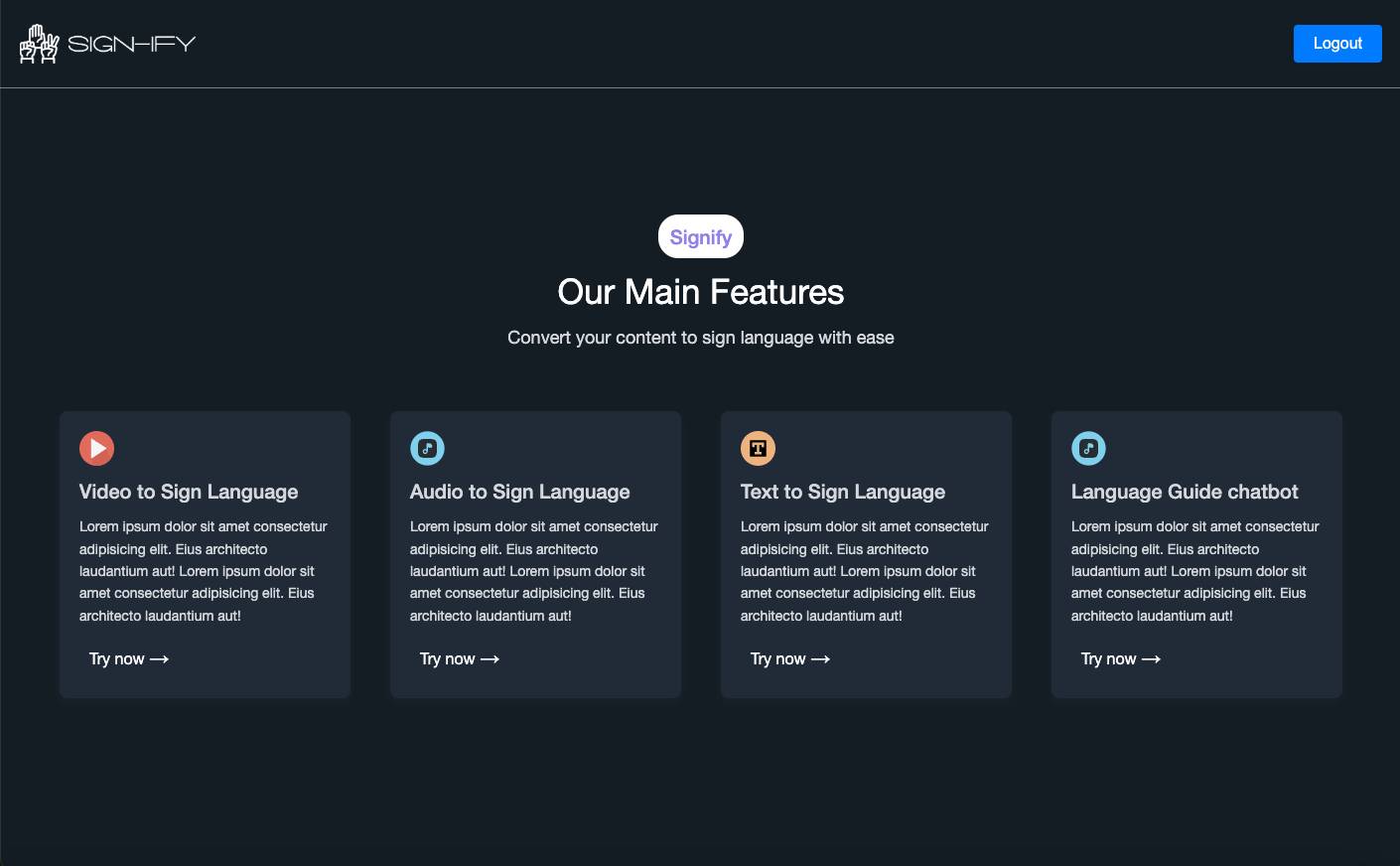

Sign-ify is a communication tool designed to bridge the gap between hearing and deaf communities. It uses AI-driven speech-to-text and specialized translation models to turn multi-modal input (YouTube links, live audio, or plain text) into visual sign language representations.

My Role: Full-Stack AI Engineer

I led development of the FastAPI backend and the integration of OpenAI’s conversational models. My focus was on keeping latency low for real-time audio-to-sign conversion and on building an analytics dashboard that tracks translation accuracy and user engagement.

Key Features

- 🎥 Multi-Modal Translation: Converts YouTube URLs, uploaded audio files, and raw text into sign language.

- 💬 OpenAI Chatbot Integration: A specialized assistant that explains the nuances of sign language grammar and culture.

- 📊 Analytics Dashboard: An admin interface for monitoring system performance and usage metrics.

- 🎙️ Speech-to-Sign: Real-time processing using AI Speech-to-Text (STT) and Text-to-Speech (TTS) pipelines.

- ⚡ FastAPI Backend: Optimized for high concurrency and smooth data flow between the AI models and the frontend.
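To make the multi-modal flow above more concrete, here is a minimal Python sketch of how incoming input might be routed and mapped to sign clips. It is an illustration under stated assumptions, not the project's actual code: `SIGN_LIBRARY`, the clip paths, and the function names `extract_youtube_id`, `classify_input`, and `text_to_signs` are all hypothetical. The naive word-by-word lookup with a fingerspelling fallback stands in for the specialized translation models, and it ignores sign language grammar.

```python
import re
from typing import List, Optional, Tuple
from urllib.parse import parse_qs, urlparse

# Hypothetical sign library: maps an English word to a sign clip path.
SIGN_LIBRARY = {
    "hello": "signs/hello.mp4",
    "thank": "signs/thank.mp4",
    "you": "signs/you.mp4",
}

YOUTUBE_HOSTS = {"youtube.com", "www.youtube.com", "m.youtube.com", "youtu.be"}


def extract_youtube_id(value: str) -> Optional[str]:
    """Return the video ID if value looks like a YouTube URL, else None."""
    parsed = urlparse(value.strip())
    if parsed.scheme not in ("http", "https"):
        return None
    if parsed.hostname not in YOUTUBE_HOSTS:
        return None
    if parsed.hostname == "youtu.be":
        # Short links carry the ID in the path: https://youtu.be/<id>
        return parsed.path.lstrip("/") or None
    # Standard links carry the ID in the "v" query parameter.
    ids = parse_qs(parsed.query).get("v")
    return ids[0] if ids else None


def classify_input(value: str) -> Tuple[str, str]:
    """Route raw input to a pipeline: ("youtube", id) or ("text", text)."""
    video_id = extract_youtube_id(value)
    if video_id:
        return ("youtube", video_id)
    return ("text", value.strip())


def text_to_signs(text: str) -> List[str]:
    """Map each word to a clip; fingerspell words missing from the library."""
    clips: List[str] = []
    for word in re.findall(r"[a-z']+", text.lower()):
        if word in SIGN_LIBRARY:
            clips.append(SIGN_LIBRARY[word])
        else:
            # Fallback: one clip per letter (apostrophes are skipped).
            clips.extend(f"letters/{ch}.mp4" for ch in word if ch.isalpha())
    return clips


if __name__ == "__main__":
    print(classify_input("https://youtu.be/abc123"))
    print(text_to_signs("Hello, thank you!"))
```

In a real pipeline, the `"youtube"` branch would presumably fetch the audio and pass it through speech-to-text before reaching a step like `text_to_signs`. Those stages are left out here because the source does not describe them in detail.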

Tech Stack

- Frontend: React.js, Tailwind CSS

- Backend: FastAPI (Python), Node.js

- AI Services: OpenAI API (GPT-4), Whisper (STT), Google/Azure TTS

- Data: YouTube Data API, Custom Sign Language Libraries

- Deliverables: Full-Stack Development, AI Bot Development, Speech-to-Text Integration

Why I Built This

The goal was to make digital content universally accessible. Captions are a great start, but they are written text and cannot convey the full linguistic nuance of sign language. Sign-ify was built to provide a more native communication experience for the deaf community, making video and audio content truly inclusive.

Empowering communication through visual AI.